RoboDuet: Learning a Cooperative Policy for Whole-body Legged Loco-Manipulation

Fully leveraging the mobile manipulation capabilities of a quadruped robot equipped with a robotic arm is non-trivial, as it requires controlling all degrees of freedom (DoFs) of the robot to achieve effective whole-body coordination. In this letter, we propose a novel framework, RoboDuet, which employs two collaborative policies to realize locomotion and manipulation simultaneously, achieving whole-body control through their mutual interaction. Beyond enabling large-range 6D pose tracking for manipulation, we find that the two-policy framework supports zero-shot transfer across quadruped robots with similar morphology and dimensions in the real world. Our experiments demonstrate that RoboDuet achieves a 23% improvement in success rate over the baseline in challenging loco-manipulation tasks that require whole-body control.

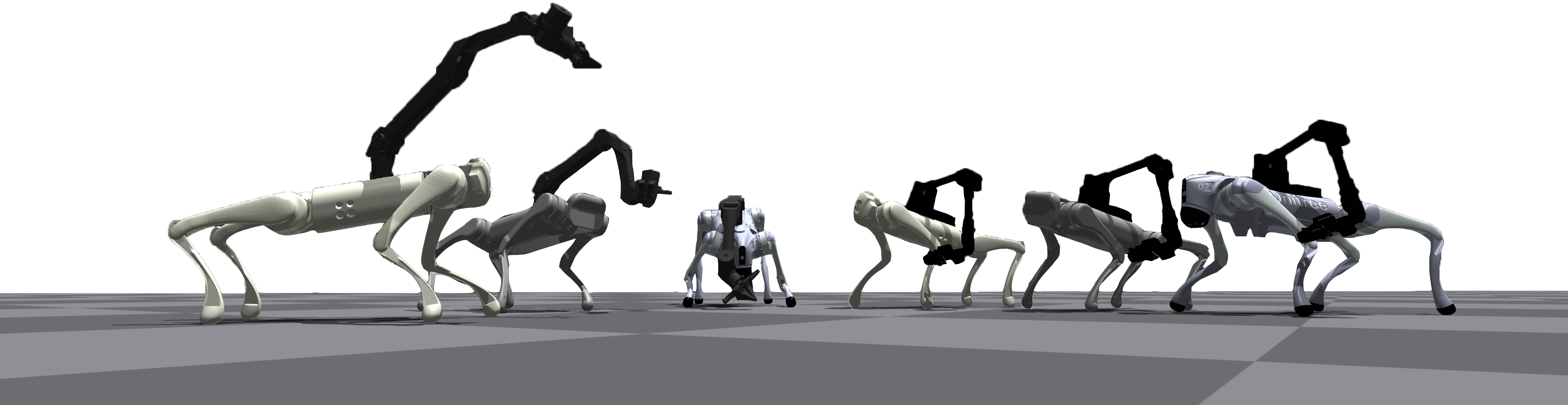

Cooperative policy for whole-body control. RoboDuet consists of a loco policy for locomotion and an arm policy for manipulation. The two policies are harmonized into a single whole-body controller: the loco policy adjusts its actions by following instructions from the arm policy. The goal of the loco policy \( \pi_{loco} \) is to follow a target command \(\mathbf{c_t} \). The goal of the arm policy \( \pi_{arm} \) is to accurately track a target 6-DoF pose. The actions of the arm policy consist of two parts. The first six actions \(a^{arm^J}_t \in \mathbb{R}^6\) are the target joint position offsets for the six arm joint actuators. The remaining actions \( a_t^{arm^G} \) replace the orientation commands of the loco policy, providing additional degrees of freedom for end-effector tracking and letting the arm policy cooperate with the loco policy.
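
The action split described above can be sketched in a few lines of Python. This is an illustrative sketch, not the authors' implementation. The function names, the `action_scale` value, and the assumption that the orientation guidance has two components (for example body pitch and roll) are hypothetical; the text only states that six actions are joint position offsets and that the rest replace the orientation commands.

```python
from typing import List, Sequence, Tuple

NUM_ARM_JOINTS = 6   # from the text: six arm joint actuators
NUM_ORIENT_CMDS = 2  # assumption: two orientation commands (e.g. pitch, roll)


def split_arm_action(arm_action: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Split the arm policy output into joint offsets and orientation guidance."""
    expected = NUM_ARM_JOINTS + NUM_ORIENT_CMDS
    if len(arm_action) != expected:
        raise ValueError(f"expected {expected} values, got {len(arm_action)}")
    joint_offsets = list(arm_action[:NUM_ARM_JOINTS])    # a_t^{arm^J}
    orient_guidance = list(arm_action[NUM_ARM_JOINTS:])  # a_t^{arm^G}
    return joint_offsets, orient_guidance


def build_loco_command(velocity_cmd: Sequence[float],
                       orient_guidance: Sequence[float]) -> List[float]:
    """Assemble c_t: the orientation part comes from the arm policy,
    not from the user (hypothetical command layout)."""
    return list(velocity_cmd) + list(orient_guidance)


def arm_joint_targets(default_pos: Sequence[float],
                      joint_offsets: Sequence[float],
                      action_scale: float = 0.25) -> List[float]:
    """Turn offsets into joint position targets around a default pose.
    The scale factor is a hypothetical hyperparameter."""
    return [q0 + action_scale * dq for q0, dq in zip(default_pos, joint_offsets)]
```

In this sketch, the loco policy would receive the output of `build_loco_command` as its command at every step, which is one way the arm policy can steer the body posture while the loco policy keeps tracking the velocity part of the command.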

Two-stage training. To achieve both robust locomotion and flexible manipulation, we adopt a two-stage training strategy. Stage 1 focuses on obtaining a robust locomotion capability; its design is inspired by a powerful blind locomotion algorithm. Stage 2 aims to coordinate locomotion and manipulation to achieve large-range whole-body mobile manipulation; in this stage, the arm policy is activated together with all of the robotic arm joints.
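
The two-stage schedule can be pictured as a small configuration switch. The sketch below is a hypothetical illustration, not the authors' training code. The `StageConfig` fields, the assumption that the arm joints are held inactive in Stage 1, the assumption that the loco policy keeps training in Stage 2, and the `stage1_iterations` parameter are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageConfig:
    name: str
    train_loco_policy: bool  # assumption: loco policy is trained in both stages
    arm_policy_active: bool  # arm policy only runs in Stage 2
    arm_joints_active: bool  # assumption: arm joints are inactive in Stage 1


STAGE_1 = StageConfig("stage1_locomotion", True, False, False)
STAGE_2 = StageConfig("stage2_coordination", True, True, True)


def stage_for_iteration(iteration: int, stage1_iterations: int) -> StageConfig:
    """Return the active stage for a training iteration.

    `stage1_iterations` is a hypothetical hyperparameter: the number of
    iterations spent on locomotion alone before the arm policy is enabled.
    """
    if iteration < 0:
        raise ValueError("iteration must be non-negative")
    return STAGE_1 if iteration < stage1_iterations else STAGE_2
```

A training loop could call `stage_for_iteration` once per iteration and use the returned flags to decide whether the arm policy's actions are applied or the arm is left inactive.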

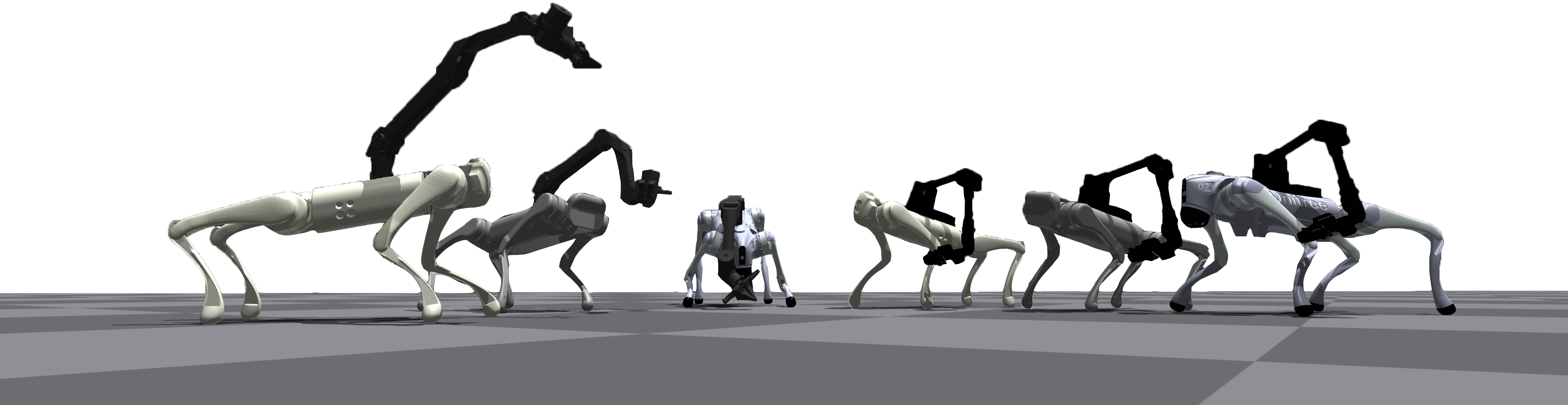

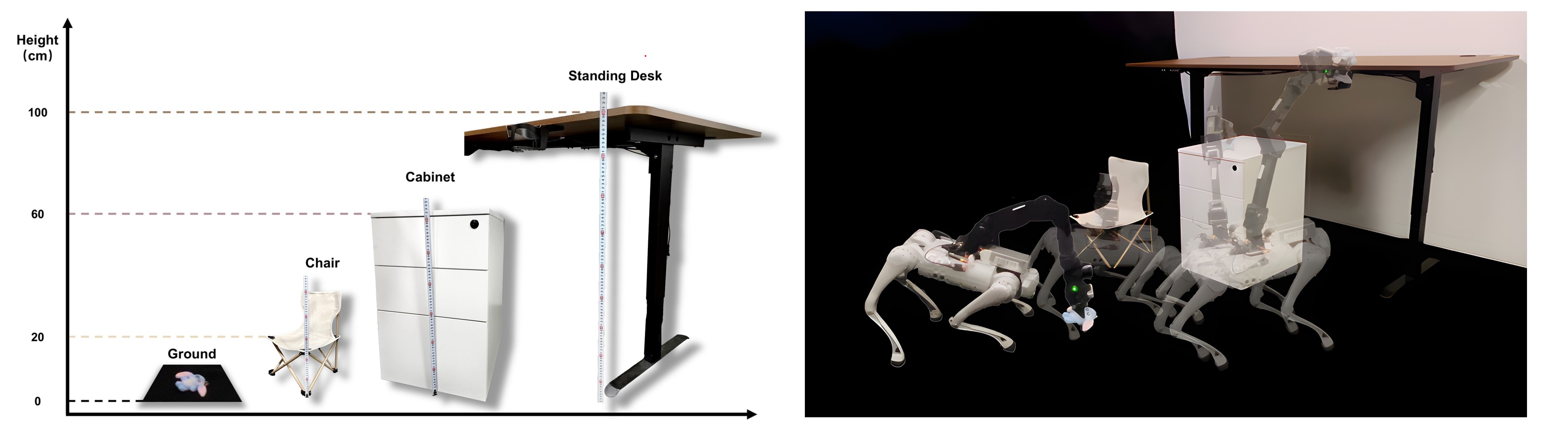

Given a series of challenging target poses, RoboDuet enables the legged robot to respond rapidly to the arm's guidance, adjusting its body posture to get as close to the target as possible. This demonstrates robust whole-body control capabilities.

While maintaining the manipulator's target pose, RoboDuet can traverse slopes, gravel, and other complex terrain, and can even traverse such terrain backward.

We demonstrate RoboDuet's ability to manipulate during locomotion with a door-opening task, where the door handle height is 100 cm.

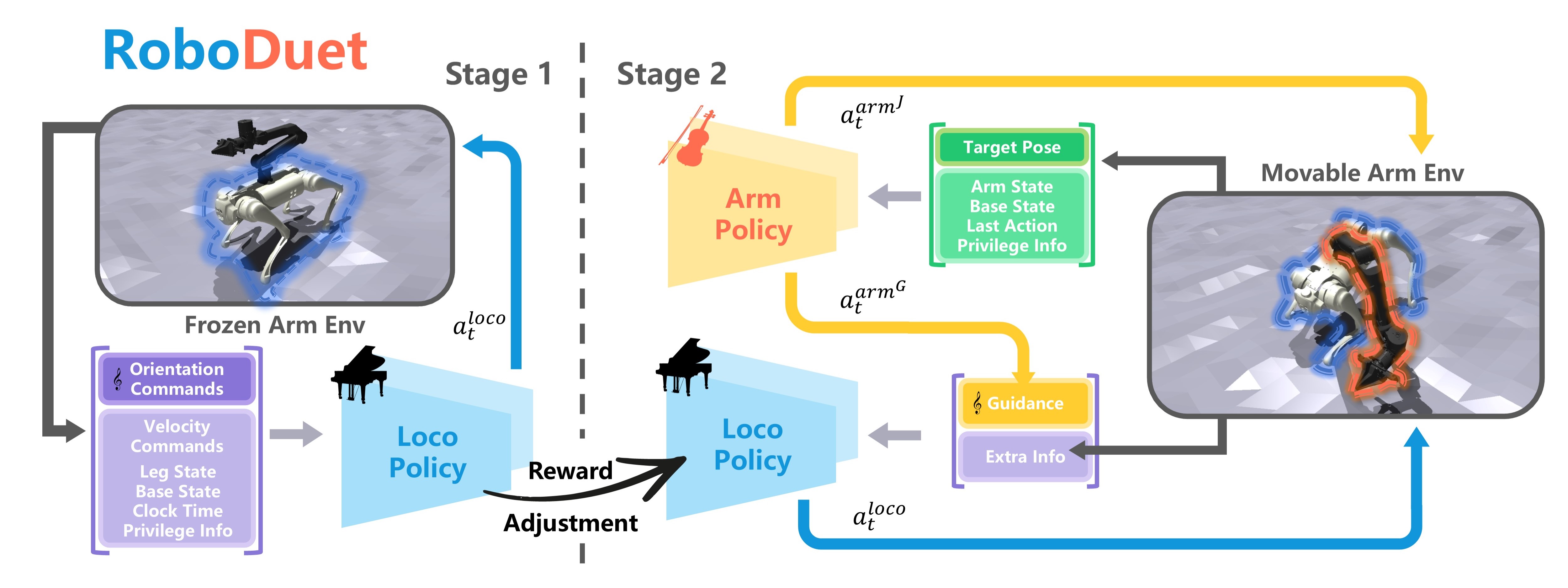

To demonstrate the robot's mobile manipulation capabilities, we tasked the robot with transporting objects across different heights, specifically targeting four representative levels: ground (0 cm), chair (20 cm), cabinet (60 cm), and standing desk (100 cm). The results indicate that RoboDuet effectively uses whole-body control to accomplish these mobile manipulation tasks.

RoboDuet achieves a 23% improvement in success rate for more challenging mobile manipulation tasks compared to an adaptive floating base + inverse dynamics (AFB+ID) controller baseline.

To evaluate zero-shot transferability across different quadruped robots, we directly deployed policies trained on the Go1+ARX5 configuration onto the Go2+ARX5, whose base is 14.7% heavier. We used a VR device to send identical target poses to both systems. The results demonstrate that both configurations exhibit agile 6D pose tracking and robust whole-body control capabilities.

Whole-body control allows the robot to wield the hammer with greater striking force.

Agile 6D pose manipulation enables the robot to regrasp dropped objects.

Furthermore, by providing a series of discrete target poses during the quadruped robot's movement, the system effectively adapts its posture to each target while maintaining stable locomotion throughout the whole process.

@misc{pan2024roboduetwholebodyleggedlocomanipulation,
  title={RoboDuet: Learning a Cooperative Policy for Whole-body Legged Loco-Manipulation},
  author={Guoping Pan and Qingwei Ben and Zhecheng Yuan and Guangqi Jiang and Yandong Ji and Shoujie Li and Jiangmiao Pang and Houde Liu and Huazhe Xu},
  year={2024},
  eprint={2403.17367},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2403.17367},
}